EU AI Act: Transparency Requirements Explained

Overview of EU AI Act transparency: disclosure rules for chatbots and AI content, documentation standards, timelines, and penalties.

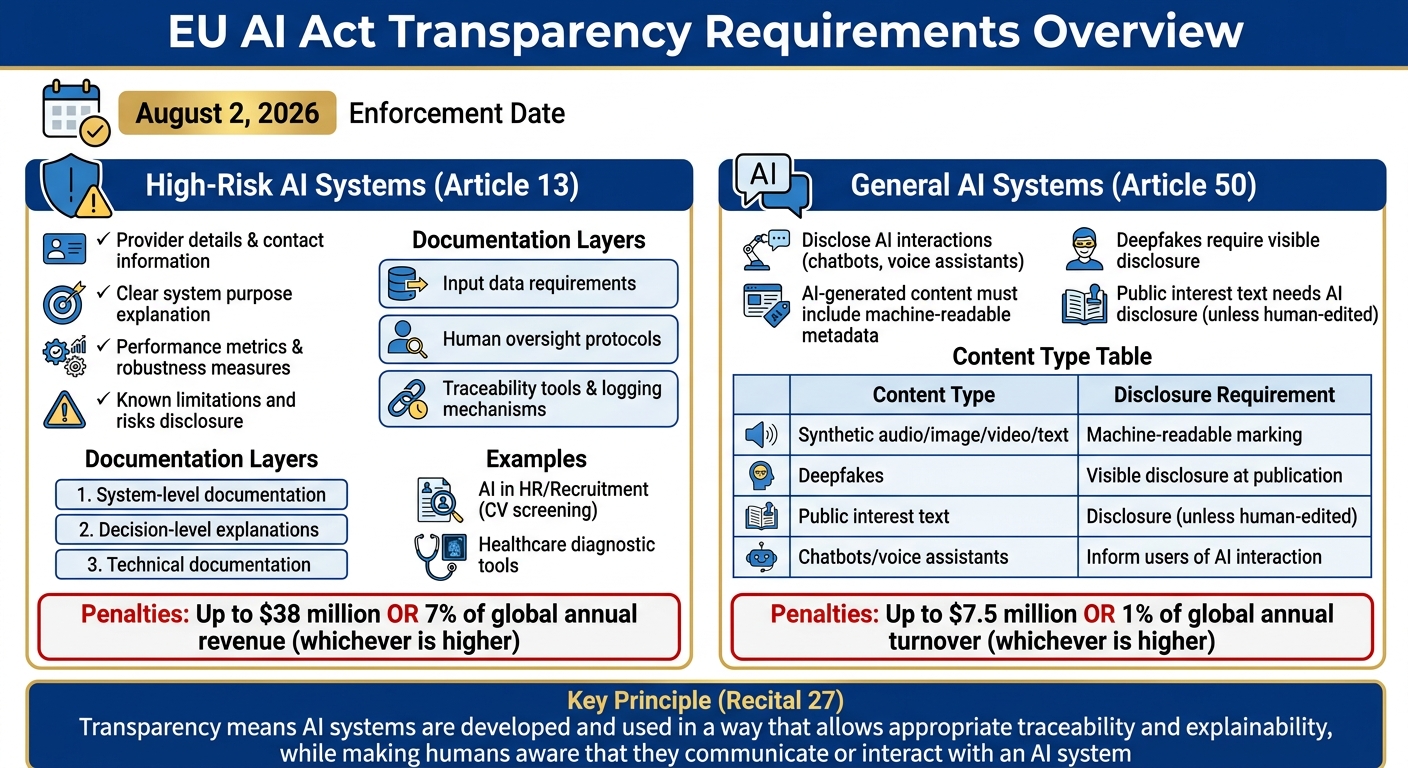

The EU AI Act is the world's first legal framework for regulating artificial intelligence, with a strong focus on transparency. It introduces strict requirements for high-risk AI systems, emphasizing traceability, explainability, and user awareness. Here's what you need to know:

- Key Transparency Rules:
  - High-risk AI systems must provide clear documentation, including system purpose, performance metrics, limitations, and operational details.
  - Users must be informed when interacting with AI systems (e.g., chatbots, voice assistants).
  - AI-generated content, like deepfakes, must include visible disclosure or metadata.
- Penalties for Noncompliance:
  - Fines of up to €35 million or 7% of global annual turnover for the most severe violations.
  - For general AI systems, fines can reach €7.5 million or 1% of global annual turnover.
- Timeline:
  - Most provisions take effect on August 2, 2026.
- Documentation Standards:
  - Providers must maintain layered documentation (e.g., system-level info, decision explanations) and detailed technical files.

The Act aims to reduce risks like automation bias and misuse while ensuring accountability for AI developers and deployers. Navigating its more complex requirements often calls for dedicated support, such as an EU AI Act Copilot. Compliance isn't optional - it's necessary to avoid penalties and build trust in AI systems.

::: @figure  {EU AI Act Transparency Requirements: High-Risk vs General AI Systems Compliance Overview}

:::


Brando Benifei on Balancing Transparency, IP and Enforcement in the EU AI Act | RegulatingAI Podcast

::: @iframe https://www.youtube.com/embed/f62JIPXT8Cs :::

Transparency Requirements for High-Risk AI Systems (Article 13)

Article 13 of the EU AI Act outlines specific transparency obligations for providers of high-risk AI systems. These requirements aim to ensure that such systems are designed with enough clarity for deployers to interpret their outputs and use them responsibly. While providers aren’t required to disclose proprietary code, they must share key operational details and explain system limitations.

User Information Requirements

High-risk AI systems must come with clear, accessible instructions that are easy to understand and available digitally. These instructions should include:

- Provider details: The identity and contact information of the provider.

- Purpose: A clear explanation of the system’s intended use.

- Performance details: Information on performance metrics, robustness measures, and tested cybersecurity standards.

- Known limitations and risks: A discussion of potential risks, including those related to health, safety, or fundamental rights, as well as risks from foreseeable misuse.

- Operational specifics: Details about input data requirements, human oversight protocols, computational needs, system lifespan, and maintenance schedules.

- Traceability tools: Mechanisms for logging, storing, and interpreting system data to ensure accountability.

In addition to user instructions, providers must follow stringent documentation practices to meet the EU AI Act’s transparency standards.
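One way to keep track of the checklist above is to treat it as a structured record and flag whatever is still empty. The sketch below is only an illustration: the `InstructionsForUse` class, its field names, and the `missing_sections` helper are hypothetical and do not come from the Act or from any official template.

```python
from dataclasses import dataclass, fields


@dataclass
class InstructionsForUse:
    """Hypothetical record mirroring the checklist above; field names are illustrative."""
    provider_contact: str = ""
    intended_purpose: str = ""
    performance_details: str = ""
    known_limitations_and_risks: str = ""
    operational_specifics: str = ""
    traceability_tools: str = ""


def missing_sections(doc: InstructionsForUse) -> list[str]:
    """Return the names of checklist sections that are still empty."""
    return [f.name for f in fields(doc) if not getattr(doc, f.name).strip()]


# A partially completed draft (made-up provider and purpose).
draft = InstructionsForUse(
    provider_contact="Example Provider Ltd, compliance@example.com",
    intended_purpose="Ranking CVs for shortlisting by a human recruiter",
)
print(missing_sections(draft))
```

A check like this only confirms that each section exists. Whether the content is clear and sufficient still needs human review.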

Documentation Standards

The EU AI Act doesn’t prescribe a single format for documentation but emphasizes a layered approach to meet the needs of various stakeholders:

- System-level documentation: Covers the system’s overall behavior, intended purpose, and known failure modes.

- Decision-level explanations: Focuses on the factors influencing individual outcomes and the confidence levels associated with them.

- Technical documentation: Offers detailed insights into the model’s architecture and validation processes.

The goal is to provide explanations tailored to the needs of different users. For example, tools like SHAP or LIME can offer localized insights for complex models. The depth of explanation will vary depending on the stakeholder - what a medical professional needs to know about a diagnostic tool might differ significantly from what a consumer needs to understand about a credit score.

This multi-layered documentation approach is critical for ensuring transparency in high-risk AI applications.
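To make the idea of a decision-level explanation concrete, here is a minimal sketch for the simplest possible case, a linear scoring model. It does not use the SHAP or LIME libraries. It computes each feature's contribution by hand as weight times the difference from a baseline input. The feature names, weights, and values are invented for illustration.

```python
def explain_linear_decision(weights, baseline, applicant):
    """Per-feature contribution to a linear score, relative to a baseline input.

    For a linear model, weight * (value - baseline) is an additive attribution:
    the contributions sum to score(applicant) - score(baseline).
    """
    return {
        name: weights[name] * (applicant[name] - baseline[name])
        for name in weights
    }


# Hypothetical credit-scoring features and weights, for illustration only.
weights = {"income_k": 0.5, "missed_payments": -10.0, "account_age_years": 2.0}
baseline = {"income_k": 50, "missed_payments": 1, "account_age_years": 5}
applicant = {"income_k": 40, "missed_payments": 3, "account_age_years": 8}

contributions = explain_linear_decision(weights, baseline, applicant)

# List the factors from most to least influential.
for name, value in sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True):
    print(f"{name}: {value:+.1f}")
```

For complex models no such closed form exists, which is where attribution tools like SHAP or LIME come in. The output format, a ranked list of factors behind one outcome, is the part that carries over to decision-level documentation.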

High-Risk AI Use Case Examples

Here’s how these transparency requirements play out in real-world scenarios:

- AI in HR/Recruitment: Systems used for CV screening or interview scoring must provide documentation explaining how candidate rankings are determined, how the system performs across diverse applicant groups, and the safeguards in place to prevent bias.

- Healthcare diagnostic tools: Hospitals using AI for diagnostics need information on the tool’s accuracy across different patient demographics, the types of medical imaging it was trained on, situations requiring human review, and how to interpret confidence scores.

For example, a hospital deploying an AI diagnostic tool should understand the system’s accuracy across various patient groups and receive guidance on when human intervention is necessary. Similarly, an organization using AI for hiring decisions must be able to assess the system’s fairness and effectiveness across diverse applicant profiles while ensuring compliance with anti-discrimination standards.

General Transparency Rules for AI Systems (Article 50)

Article 50 lays out transparency requirements that apply to a wide range of AI systems, going beyond just high-risk applications. These rules cover AI systems that interact with people, generate content, or perform emotion recognition. Starting August 2, 2026, non-compliance could lead to fines of up to €7.5 million or 1% of global annual turnover, whichever is higher [8].

Recital 27 of the EU AI Act defines transparency as follows:

"Transparency means that AI systems are developed and used in a way that allows appropriate traceability and explainability, while making humans aware that they communicate or interact with an AI system, as well as duly informing deployers of the capabilities and limitations of that AI system and affected persons about their rights." [1]

Disclosing AI Interactions

Whenever an AI system interacts directly with users - like chatbots, voice assistants, or customer service tools - users must be informed they are engaging with AI. This disclosure should happen no later than the first interaction and must be clear and accessible [4][10]. There’s an exception for cases where it’s "obvious from the circumstances" that the interaction involves AI, but this is a narrow exception. As Abhishek G Sharma, Founder & CEO of Move78 International Limited, advises:

"The exception [for obvious AI] is narrow... My recommendation: disclose anyway. The cost of a small notice is zero. The cost of getting the 'obvious' judgment wrong is up to $7.5 million." [8]

For best practices, use a two-layer approach: include a persistent visual indicator (like an "AI Assistant" badge) and provide an explicit notice during the first interaction. Avoid burying this information in the Terms of Service - it won’t meet the "clear and distinguishable" requirement [9]. Also, ensure compliance with EU accessibility standards to make this disclosure universally understandable.
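The two-layer approach can be sketched in a few lines. This is an assumption-laden illustration rather than a compliant implementation: `ChatSession`, the `AI_NOTICE` wording, and the message format are hypothetical and not tied to any real chatbot framework.

```python
from dataclasses import dataclass, field

AI_NOTICE = "You are chatting with an AI assistant, not a human."  # example wording


@dataclass
class ChatSession:
    """Hypothetical session wrapper; not tied to any real chatbot framework."""
    badge: str = "AI Assistant"  # persistent indicator attached to every reply
    notice_shown: bool = False
    transcript: list = field(default_factory=list)

    def reply(self, model_output: str) -> dict:
        """Wrap a model reply, adding the explicit notice on the first turn only."""
        message = {"badge": self.badge, "text": model_output}
        if not self.notice_shown:
            message["notice"] = AI_NOTICE
            self.notice_shown = True
        self.transcript.append(message)
        return message


session = ChatSession()
first = session.reply("Hi! How can I help with your order?")
second = session.reply("Your parcel ships tomorrow.")
```

Keeping the notice logic in the session layer, rather than in the model prompt, means the disclosure cannot be dropped by an unexpected model response.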

AI-Generated Content Disclosure

If your AI system generates audio, images, video, or text, its output must carry machine-readable marking, for example metadata using standards like XMP or IPTC, or cryptographic provenance systems such as C2PA [8]. Deepfakes must also be clearly labeled as artificially generated at the time of publication [7].

A notable example of why this is crucial occurred in February 2026, when German public broadcaster ZDF's "heute journal" aired an AI-generated video showing ICE officers detaining a woman and children. The video was used to illustrate a U.S. immigration report, highlighting the importance of clear visual marking standards [12].

For text related to public interest topics, AI involvement must be disclosed unless the content has undergone thorough human review with documented editorial oversight procedures. A simple or superficial review won’t qualify for this waiver [12].

| AI Content Type | Who's Responsible | What's Required |
|---|---|---|
| Synthetic audio/image/video/text | Provider | Machine-readable marking (metadata/watermarks) |
| Deepfakes | Deployer | Visible disclosure at publication |
| Public interest text | Deployer | Disclosure of AI generation (unless human-edited) |
| Chatbots/voice assistants | Provider | Design system to inform users of AI interaction |

Some exceptions exist. For example, AI performing assistive functions like spell-checking doesn’t need labeling. Similarly, artistic or satirical works may have more flexibility, though the presence of AI-generated content should still be disclosed in a way that doesn’t disrupt the user experience [7].

To meet transparency requirements, adopt a multi-layered marking strategy. This could include digitally signed metadata, invisible watermarks, and detailed system logs [11][12]. Additionally, ensure your content pipelines retain AI provenance metadata instead of stripping it. Keeping a transparency register to document how and when disclosure mechanisms are used can serve as a critical safeguard if regulators investigate, and an AI compliance policy assistant can streamline that record-keeping [8]. These measures collectively uphold transparency, ensuring that users remain informed about AI interactions and outputs.
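As a rough sketch of what signed provenance metadata plus a transparency register might look like, the example below builds a sidecar record for a piece of generated content and verifies it later. The record fields are illustrative and do not follow the XMP, IPTC, or C2PA schemas. The HMAC is a simple stand-in for a real digital signature, and the function names, key, and in-memory register are all assumptions.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone


def build_provenance_record(content: bytes, generator: str, secret_key: bytes) -> dict:
    """Build a sidecar record that marks content as AI-generated.

    The field names are illustrative, not an XMP, IPTC, or C2PA schema, and the
    HMAC is a simple stand-in for a real digital signature.
    """
    record = {
        "ai_generated": True,
        "generator": generator,
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "created_utc": datetime.now(timezone.utc).isoformat(),
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["signature"] = hmac.new(secret_key, payload, hashlib.sha256).hexdigest()
    return record


def verify_provenance_record(content: bytes, record: dict, secret_key: bytes) -> bool:
    """Check that the record matches the content and has not been altered."""
    unsigned = {k: v for k, v in record.items() if k != "signature"}
    payload = json.dumps(unsigned, sort_keys=True).encode("utf-8")
    expected = hmac.new(secret_key, payload, hashlib.sha256).hexdigest()
    return (
        hmac.compare_digest(expected, record.get("signature", ""))
        and unsigned.get("content_sha256") == hashlib.sha256(content).hexdigest()
    )


key = b"demo-key-not-for-production"
image_bytes = b"...synthetic image bytes..."
record = build_provenance_record(image_bytes, "example-image-model-v1", key)
transparency_register = [record]  # in practice this would be durable storage
```

A sidecar record like this is easy to strip from a file, which is why the layered strategy described above pairs metadata with watermarks and logs.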

How to Comply with Transparency Requirements

Meeting the EU AI Act compliance requirements for transparency involves more than just ticking boxes. Organizations need to evaluate their systems, create thorough documentation, and maintain evidence that can hold up under regulatory examination. These steps ensure accountability throughout the AI system's lifecycle. With enforcement of high-risk AI requirements starting on August 2, 2026, and penalties reaching up to €35 million or 7% of global annual turnover for severe violations [5], compliance is not optional - it's essential.
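To see how a penalty ceiling of the form "fixed amount or percentage of turnover" plays out, here is a small arithmetic sketch. It assumes the "whichever is higher" rule mentioned earlier in this article applies, uses the caps as quoted here, and works with made-up turnover figures. The function name is hypothetical.

```python
def maximum_fine(fixed_cap: float, turnover_share: float, global_turnover: float) -> float:
    """Upper bound of a fine: the higher of a fixed cap or a share of turnover."""
    return max(fixed_cap, turnover_share * global_turnover)


# Caps as quoted in this article; turnover figures are made up for illustration.
# All amounts are in the same currency unit as the cap.
smaller_company = maximum_fine(35_000_000, 0.07, 200_000_000)    # fixed cap dominates
larger_company = maximum_fine(35_000_000, 0.07, 2_000_000_000)   # turnover share dominates

print(f"{smaller_company:,.0f}")
print(f"{larger_company:,.0f}")
```

For larger organizations the percentage quickly becomes the binding figure, so exposure scales with revenue rather than stopping at the fixed amount.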

Running Transparency Assessments

Start by identifying your stakeholders - this includes end-users, system operators, auditors, and developers - and ensure your AI systems provide the level of transparency each group requires [6]. For instance, while a doctor using diagnostic AI may need detailed technical explanations, a bank officer reviewing loan applications might require less complex insights. For advanced models, consider tools like SHAP or LIME to explain how specific decisions are made rather than just presenting the outcomes [6].

Before deploying high-risk AI systems, complete a Fundamental Rights Impact Assessment (FRIA) [3]. This process identifies potential effects on fundamental rights and documents the steps needed to mitigate risks. Additionally, maintain automatically generated logs for at least six months to ensure traceability [3].
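A retention rule like this is easy to enforce in a log clean-up job. The sketch below is a minimal illustration that approximates "six months" as 183 days, which is an assumption. The `may_purge` helper and the example dates are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Assumption: "six months" is approximated as 183 days; confirm the exact
# period that applies to your system before relying on this.
MIN_RETENTION = timedelta(days=183)


def may_purge(log_created_at: datetime, now: datetime) -> bool:
    """Return True only once a log entry is older than the minimum retention period."""
    return now - log_created_at > MIN_RETENTION


now = datetime(2026, 8, 2, tzinfo=timezone.utc)
recent = datetime(2026, 5, 1, tzinfo=timezone.utc)  # about three months old
old = datetime(2025, 12, 1, tzinfo=timezone.utc)    # about eight months old

print(may_purge(recent, now), may_purge(old, now))
```

Treating the period as a minimum is deliberate: other legal or contractual rules may require keeping logs for longer.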

Once your assessments confirm that your system meets transparency standards, shift your focus to creating and maintaining agile documentation.

Creating and Updating Documentation

Under Article 11 and Annex IV of the EU AI Act, you must maintain a Technical File that demonstrates compliance with all obligations [13]. This isn't a one-time task - your documentation needs to evolve alongside your system. Adopting a continuously updated documentation process ensures that your records remain current as your AI system changes.

Templates like Model Cards or AI FactSheets can help you create consistent, comprehensive documentation [6]. These should include details such as provider identity, system characteristics, known limitations, input data specifications, human oversight measures, and maintenance requirements [2].

To keep your documentation aligned with system updates, implement an automated pipeline that updates records with every model retraining. For AI-generated content, use machine-readable labels following standards like XMP, IPTC, or C2PA. Additionally, maintain an "Evidence Vault" to store immutable audit trails [13].
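One common way to make an audit trail tamper-evident is to chain entries together with hashes, so that editing any past entry invalidates everything after it. The sketch below shows that technique in miniature. The `EvidenceVault` class and the event fields are hypothetical, and a hash chain alone does not make storage immutable, so a real deployment would still need write-once storage or an external anchor.

```python
import hashlib
import json


class EvidenceVault:
    """Append-only, hash-chained log: a sketch of a tamper-evident audit trail."""

    GENESIS = "0" * 64  # placeholder hash for the first entry

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(event: dict, prev_hash: str) -> str:
        body = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
        return hashlib.sha256(body.encode("utf-8")).hexdigest()

    def append(self, event: dict) -> dict:
        """Store an event together with a hash that links it to the previous entry."""
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = {
            "event": event,
            "prev_hash": prev_hash,
            "hash": self._digest(event, prev_hash),
        }
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != self._digest(entry["event"], prev_hash):
                return False
            prev_hash = entry["hash"]
        return True


# Record a retraining run and the documentation update that followed it.
vault = EvidenceVault()
vault.append({"type": "model_retrained", "model_version": "1.4.0"})
vault.append({"type": "documentation_updated", "model_version": "1.4.0"})
print(vault.verify())
```

Calling `append` from the retraining pipeline itself ties each documentation update to the model version that triggered it.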

Using ISMS Copilot for Compliance

To simplify compliance efforts, consider tools like ISMS Copilot. This platform provides AI-powered support tailored to transparency requirements. It covers the EU AI Act and over 50 other frameworks, making it especially useful for organizations juggling multiple compliance needs.

By uploading your AI documentation (PDF, DOCX, or XLS files up to 5MB), ISMS Copilot can identify gaps and create prioritized remediation plans to address missing elements in your transparency records [14]. For more targeted results, include specific references in your prompts, such as "Article 13 instructions for use" or "Article 50 transparency rules."

When crafting disclosure text for AI-generated content or interactions, ISMS Copilot can generate language that aligns with the EU AI Act based on your use case. The platform allows you to tailor prompts by including details like your AI system's risk level, deployment model, and purpose, ensuring the documentation fits your specific needs.

Additionally, ISMS Copilot’s workspace feature lets you manage EU AI Act projects separately from other compliance frameworks. This is particularly helpful for consultants working with multiple clients. The Free plan allows for 10 document uploads per month, while the Plus plan ($24/month) increases this limit [14].

Conclusion

Transparency is the cornerstone of trust and accountability in AI technologies. As Recital 27 of the Act explains:

"Transparency means that AI systems are developed and used in a way that allows appropriate traceability and explainability, while making humans aware that they communicate or interact with an AI system" [1].

This core idea influences everything - whether it's documenting the limitations of high-risk systems or ensuring chatbots clearly reveal their AI identity.

Throughout this article, we've seen how transparency requirements address critical challenges. They help counter automation bias, give users the tools to understand AI-driven decisions, and ensure these systems stay within their intended scope [3].

For organizations, transparency isn't just a box to check - it needs to be woven into every layer of their AI systems. As Sacha Wilson and Jacky Lai from Harbottle & Lewis put it:

"The trajectory is unmistakable: the Act positions transparency as a core principle, which is going to impact design choices, user interfaces and governance processes" [3].

By embedding transparency into their processes - through clear documentation, meaningful disclosures, and strong human oversight - companies can align with regulatory expectations while maintaining their foothold in the EU market.

Additionally, thorough documentation doesn't just shield organizations from penalties; it also builds trust. Jasper Claes from Unorma highlights:

"Documented compliance effort is a formal mitigating factor under the Act" [13].

FAQs

::: faq

How do I know if my AI system is “high-risk” under the EU AI Act?

To determine whether your AI system qualifies as "high-risk" under the EU AI Act, check whether it is used in one of the areas listed in Annex III, such as critical infrastructure, biometric identification, or education, and evaluate its potential to cause significant harm. The requirements that high-risk systems must meet are set out in Articles 9–15 of the Act, with compliance deadlines set for August 2, 2026. :::

::: faq

What counts as a compliant AI disclosure for chatbots and voice assistants?

A compliant AI disclosure, as required by the EU AI Act, clearly informs users that they are interacting with an AI system, no later than the first interaction. The notice must be clear, distinguishable, and accessible, so it cannot be buried in the Terms of Service.

In practice, a persistent visual indicator (such as an "AI Assistant" badge) combined with an explicit notice at the start of the conversation covers both points. The exception for interactions where AI involvement is "obvious from the circumstances" is narrow, so disclosing anyway is the safer choice. :::

::: faq

Do I have to label AI-generated text if a human edited it?

Not always. For text on public interest topics, AI involvement must be disclosed unless the content has undergone thorough human review with documented editorial oversight procedures. A simple or superficial edit won't qualify for this exception, so lightly edited AI-generated text must still be disclosed as such. :::
