Generic AI vs Domain-Specific AI for Compliance

Compare generic vs domain-specific AI for compliance: accuracy, data residency, audit readiness, and reduced audit risk.

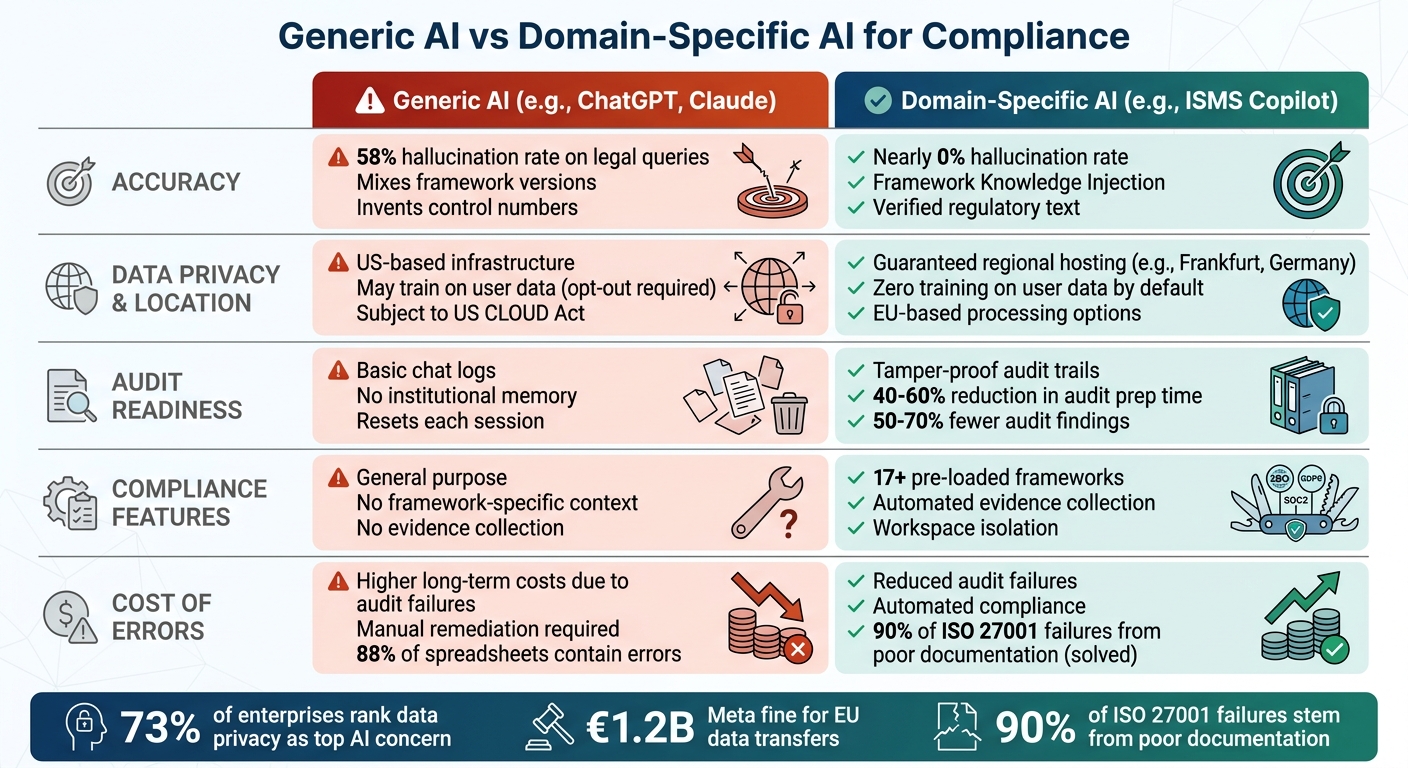

When it comes to compliance tasks, choosing the right AI tool can make or break your efforts. Should you use a generic AI tool like ChatGPT, or opt for a domain-specific AI platform? Here's the bottom line:

- Generic AI tools are versatile but often lack the precision required for compliance. They rely on broad datasets, leading to inaccuracies (e.g., a 58% hallucination rate for legal queries). They also pose risks like data privacy breaches due to US-based processing.

- Domain-specific AI tools, like ISMS Copilot, are tailored for compliance work. They use verified regulatory data, ensure data residency (e.g., EU-based servers), and include features like audit trails and framework-specific accuracy.

Key Differences:

- Accuracy: Domain-specific AI minimizes errors by grounding responses in verified standards, unlike generic AI, which may generate fabricated or outdated information.

- Data Privacy: Domain-specific platforms prioritize regional data storage and sovereignty. Generic AI often processes data in the US, creating GDPR and cross-border compliance risks.

- Audit Readiness: Domain-specific tools provide structured, tamper-proof documentation and continuous evidence collection - something generic AI lacks.

Quick Takeaway: If compliance is critical to your organization, domain-specific AI is the safer, more reliable choice.

::: @figure  {Generic AI vs Domain-Specific AI for Compliance: Key Differences}

:::


AI Security and Risk: Side-by-side Comparison of AI Compliance and Risk Frameworks

::: @iframe https://www.youtube.com/embed/wkEEK8Wp2WM :::

Why Generic AI Falls Short for Security Compliance

Generic AI tools, while great for general productivity, struggle when it comes to the precision and reliability needed for regulated compliance work. These models generate text by predicting the next most likely word rather than pulling from verified facts [8]. This can lead to outputs that sound convincing but are factually incorrect - a major risk in compliance, where accuracy is non-negotiable. Let’s break down the key issues.

Inaccurate Outputs and Hallucinations

Studies suggest that as much as 46% of AI-generated text contains hallucinations. When tested with verifiable legal questions, GPT-4 hallucinated about 58% of the time [4][9]. For compliance professionals, this level of inaccuracy is unacceptable. The consequences can be severe:

- A U.S. lawyer was sanctioned after submitting fictional case citations produced by ChatGPT.

- Air Canada had to honor a fabricated "bereavement fare" policy created by its AI chatbot [4].

Generic AI also struggles to distinguish between outdated and current regulatory standards, creating dangerous compliance gaps. For example, it might mix up ISO 27001:2013 and ISO 27001:2022 requirements, invent control numbers that don’t exist, or incorrectly assert that ISO 27001 mandates a SOC 2 Type II audit.

J.P. Roe, a professional writer, summed it up well:

"The AI doesn't get the context - like at all... I personally would not use it for anything legally load-bearing unless I had a compliance expert coming behind it to fix the mistakes" [10].

Research shows that 90% of ISO 27001 failures stem from poor documentation management and the inability to prove controls exist [4]. Generic AI lacks the ability to generate the verifiable evidence needed to meet these standards. And beyond accuracy, issues around data privacy further expose its limitations.

Data Privacy and Location Problems

Generic AI tools also pose significant risks to data privacy and cross-border data transfers. Even when providers claim to store data within the EU or UK, the inference step (where GPUs process input) often happens in the United States [6]. This creates compliance challenges for organizations bound by GDPR Chapter V and the Schrems II ruling.

Geoff Davies from PivotalEdge explained:

"Residency is strongest for stored data. If you need strict UK-only processing for everything, check how OpenAI handles inference... because not all workflows can guarantee UK-only processing" [6].

This is particularly concerning because US-based AI providers are subject to American surveillance laws, which can conflict with European data protection standards. The risks are real: in April 2023, Samsung engineers accidentally leaked proprietary source code and internal meeting notes into ChatGPT while using it for summarization and debugging - three separate incidents in just 20 days. In response, Samsung implemented a company-wide ban on generative AI for internal use [4].

Missing Specialized Compliance Knowledge

Generic AI tools also fall short when it comes to understanding specific compliance frameworks or an organization’s unique control environment, audit history, or risk profile. While these tools can draft policies, they cannot produce the objective, timestamped, tamper-proof documentation that auditors require. As Humadroid put it:

"Auditors don't evaluate whether your controls sound good. They evaluate whether your controls demonstrably operated across an entire audit period" [4].

Another common issue: generic AI often confuses legal requirements with best practices. For instance, it might incorrectly claim that AES-256 encryption is a legal mandate when regulations simply require "appropriate security measures" based on a risk assessment. This over-prescription can lead to unnecessary costs and efforts.

These gaps highlight the need for an AI assistant for ISO 27001 that provides persistent, framework-specific context and can adapt to the nuances of compliance work - something generic AI just isn’t built to handle.

How Domain-Specific AI Addresses Compliance Needs

Domain-specific AI relies on verified regulatory data rather than unpredictable internet patterns. This approach ensures that responses are grounded in structured, curated knowledge - essential when precision is non-negotiable. Here's how it tackles key compliance challenges.

Pre-Loaded Regulatory Frameworks

Unlike general-purpose AI, domain-specific tools come equipped with pre-loaded regulatory frameworks to ensure accuracy. For instance, ISMS Copilot uses a system called "Dynamic Framework Knowledge Injection", which eliminates guesswork. Mention a standard like ISO 27001 or SOC 2, and the system immediately identifies it using pattern matching. It then loads the relevant controls, clauses, and requirements into its context before generating a response. This process, which takes just 5–15 seconds, drastically reduces errors in framework-specific queries [11][12][14].
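The detect-then-load flow described above can be illustrated with a minimal sketch. This is not ISMS Copilot's implementation: the `FRAMEWORKS` table, the regular expressions, and the one-line reference snippets are hypothetical placeholders, and a real knowledge base would hold the full, verified text of each standard.

```python
import re

# Hypothetical, heavily truncated knowledge base (placeholder entries only).
FRAMEWORKS = {
    "ISO 27001": {
        "pattern": re.compile(r"\bISO(?:/IEC)?\s*27001\b", re.IGNORECASE),
        "context": "ISO/IEC 27001:2022: Annex A contains 93 controls in 4 themes.",
    },
    "SOC 2": {
        "pattern": re.compile(r"\bSOC\s*2\b", re.IGNORECASE),
        "context": "SOC 2: reports are issued against the AICPA Trust Services Criteria.",
    },
}


def detect_frameworks(question: str) -> list[str]:
    """Return the names of all frameworks mentioned in the question."""
    return [name for name, fw in FRAMEWORKS.items() if fw["pattern"].search(question)]


def build_prompt(question: str) -> str:
    """Prepend reference text for each detected framework to the question."""
    detected = detect_frameworks(question)
    if not detected:
        return question  # nothing recognized, so pass the question through unchanged
    reference = "\n".join(FRAMEWORKS[name]["context"] for name in detected)
    return f"Reference material:\n{reference}\n\nQuestion: {question}"


print(build_prompt("How does ISO 27001 relate to SOC 2?"))
```

The point of the design is that the model answers with the relevant clauses already in its context instead of recalling them from training data.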

The information is sourced from curated databases verified by Governance, Risk, and Compliance (GRC) engineers against official standards [12][13]. Currently, ISMS Copilot supports 17 frameworks, including ISO 27001:2022, ISO 42001:2023, SOC 2, HIPAA, GDPR, NIS 2, DORA, and the EU AI Act. Every response includes specific citations to these frameworks, making the outputs ready for audits from the start [11][12][13].

Complete Audit Trails and Decision Tracking

Generic AI resets with each conversation, but domain-specific platforms maintain institutional memory over time - something auditors value. This is especially important given that 90% of ISO 27001 failures result from poor documentation rather than missing technical controls [4].

ISMS Copilot offers features like change tracking, separate workspaces for different projects or clients, and PII Reduction Mode, which automatically redacts personal information like names, emails, and phone numbers before processing. Users can also configure data retention periods, from as short as 1 day to as long as 7 years, to align with regulatory or internal policies [13].
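As a rough illustration of what redaction before processing involves, the sketch below masks email addresses and phone numbers with regular expressions. It is an assumption-laden example, not the product's PII Reduction Mode: the patterns are deliberately loose, the phone pattern will also catch other long digit runs such as ISO dates, and personal names are not handled because detecting them reliably needs named-entity recognition rather than regexes.

```python
import re

EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# Loose phone pattern: optional "+", a digit, at least six digits or
# separators (space, dot, dash, parentheses), and a final digit.
PHONE = re.compile(r"\+?\d[\d\s().-]{6,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)  # emails first, so their digits are not treated as phones
    return PHONE.sub("[PHONE]", text)


print(redact("Contact jane.doe@example.com or +49 69 1234 5678."))
```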

Data Location Control and Deployment Flexibility

For organizations bound by strict data sovereignty rules, domain-specific AI ensures compliance through regional hosting guarantees. For example, ISMS Copilot stores all data in Frankfurt, Germany, and offers an Advanced Data Protection mode that routes queries exclusively through EU-based AI providers like Mistral AI [13][14]. This ensures adherence to GDPR and other data protection laws.

All backend providers operate under Zero Data Retention (ZDR) agreements, meaning that user prompts and outputs are never stored long-term or used for model training [13][14]. For organizations with even stricter requirements, some platforms allow deployment on-premises, in private clouds, or on edge infrastructure, ensuring data remains entirely within the organization's control [15]. This level of data management helps organizations meet even the most rigorous compliance standards.

Data Privacy and Localization: The Main Difference

When it comes to compliance, where data is stored and who can access it are critical concerns. Generic AI tools like ChatGPT often process data through US-based infrastructure, which can create problems for companies subject to European, Chinese, or industry-specific regulations. Domain-specific AI, on the other hand, is designed with data localization as a priority.

How a tool handles data privacy and localization is a major factor separating domain-specific AI from generic solutions. In fact, 73% of enterprises rank data privacy and security as their top AI concern, and 77% now consider a vendor’s country of origin before making purchasing decisions [17]. These concerns aren’t just theoretical: regulators have taken action. For example:

- Meta was fined €1.2 billion in 2023 for transferring EU user data to the United States.

- TikTok faced a €530 million penalty in 2025 for sending EU citizen data to servers in China.

- Uber paid €290 million in 2024 for moving driver records across borders [17][19].

These cases underline the importance of how domain-specific AI redefines data storage, processing controls, and cross-border risk management.

Meeting Regional Data Storage Laws

Domain-specific AI platforms carefully address both data residency (where servers are physically located) and data sovereignty (the legal framework governing that data). Simply selecting an "EU region" from a US-based cloud provider doesn’t necessarily ensure compliance. As Jaipal Singh explains:

"The legal jurisdiction follows the company, not the data center" [17].

Take ISMS Copilot as an example. It stores data in Frankfurt, Germany, and includes an Advanced Data Protection mode that routes queries exclusively through EU-based providers. All backend providers operate under Zero Data Retention (ZDR) agreements, meaning prompts and outputs aren’t saved or used for model training [1]. This setup aligns with GDPR requirements and helps organizations navigate ongoing uncertainty around EU-U.S. data transfers, including legal challenges to the EU-U.S. Data Privacy Framework (a potential "Schrems III") [18].

The stakes are high. Under the EU AI Act, penalties can reach €35 million or 7% of global annual turnover, whichever is higher, and combined violations of GDPR and the AI Act could theoretically hit 11% of global turnover [18]. By 2026, at least 34 countries had introduced or strengthened data localization laws impacting AI processing [18].
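The penalty ceilings quoted above are easier to compare with a worked example. The sketch below assumes the "whichever is higher" rule that applies to the AI Act's top penalty tier and treats the 11% figure as a simple percentage of turnover. The function names and sample turnovers are illustrative, and actual fines depend on the infringement and the regulator.

```python
AI_ACT_FIXED_EUR = 35_000_000  # fixed amount quoted above
AI_ACT_PCT = 7                 # percent of global annual turnover
COMBINED_PCT = 11              # theoretical GDPR + AI Act figure quoted above


def ai_act_max_fine(turnover_eur: int) -> int:
    """Upper bound of an AI Act fine, assuming 'whichever is higher'."""
    return max(AI_ACT_FIXED_EUR, turnover_eur * AI_ACT_PCT // 100)


def combined_max_exposure(turnover_eur: int) -> int:
    """Theoretical combined exposure as a flat percentage of turnover."""
    return turnover_eur * COMBINED_PCT // 100


for turnover in (100_000_000, 1_000_000_000):
    print(turnover, ai_act_max_fine(turnover), combined_max_exposure(turnover))
```

Under these assumptions the percentage only overtakes the fixed amount above €500 million in turnover; below that, the €35 million figure is the binding ceiling.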

Reducing Cross-Border Data Risks

Even when data is stored regionally, cross-border data flows can introduce additional compliance risks. Generic AI APIs often create hidden data flows through secondary pathways, such as content moderation, safety checks, or logging. These touchpoints may fall under different legal jurisdictions. Additionally, many generic providers reserve the right to use API inputs for model training unless users explicitly opt out [18].

Domain-specific AI minimizes these risks with intentional design. For instance, some platforms use an "Insights Not Data" approach, where data is pre-processed locally to remove or pseudonymize personal identifiers. Only anonymized insights are sent to cloud-based models [18]. Others offer workspace isolation, ensuring that data from different clients or projects remains separate [1].
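A minimal sketch of the local pre-processing step might look like the following. It assumes a keyed hash (HMAC-SHA256) as the pseudonymization technique and a hypothetical record layout with `email` and `failed_logins` fields; neither comes from any specific platform. Note that keyed hashing is pseudonymization rather than anonymization: whoever holds the key can re-link records, so the output is still personal data under GDPR.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Map an identifier to a stable token that cannot be reversed without the key."""
    digest = hmac.new(secret_key, identifier.lower().encode("utf-8"), hashlib.sha256)
    return "user_" + digest.hexdigest()[:12]


def to_insight(record: dict, secret_key: bytes) -> dict:
    """Keep only the fields a cloud model needs, with the identifier replaced."""
    return {
        "subject": pseudonymize(record["email"], secret_key),
        "failed_logins": record["failed_logins"],
    }


key = b"keep-this-key-on-premises"  # hypothetical key; never sent to the cloud
record = {"email": "Jane.Doe@example.com", "name": "Jane Doe", "failed_logins": 7}
print(to_insight(record, key))
```

Because the token is stable for a given key, the remote model can still correlate events for the same subject without ever seeing the email address or name.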

This approach can also save time and money. According to [16], implementing AI-powered data residency can take just 2–3 weeks, compared to 4–6 months with manual methods, and estimated costs drop from $150,000–$250,000 to $40,000–$80,000. The same source reports 100% accuracy for automated validation against a 15–20% error rate for manual reviews, figures best read as reported results rather than guarantees.

Clear Data Processing and Storage Controls

Domain-specific AI platforms provide transparency and control over every step of data processing. While generic AI often operates as a "black box", domain-specific solutions reveal the entire processing chain. For example, ISMS Copilot allows organizations to configure data retention settings to meet both regulatory and internal policies. It also includes features to automatically redact sensitive personal information during processing. Some platforms even offer deployment options like on-premises, private cloud, or edge setups to keep data fully under the organization’s control.

| Factor | Domain-Specific AI (e.g., ISMS Copilot) | Generic AI (e.g., ChatGPT) |
|---|---|---|
| Data Location | Guaranteed regional (e.g., Frankfurt, Germany) [1] | Typically US-based infrastructure [1] |
| Model Training | Zero training on user data by default [1] | May train on inputs (opt-out required) [1] |
| Jurisdiction | Often non-US or sovereign-cloud based [17] | Subject to US CLOUD Act [17] |
| Data Isolation | Isolated workspaces for multi-client use [1] | Simple conversation threads; risk of data crossover [1] |

As Prem AI aptly puts it:

"The era of 'move fast and figure out compliance later' is over for AI" [19].

Organizations that prioritize data location and compliance from the start - not as an afterthought - are better equipped to pass audits and avoid penalties. This strong foundation for data control directly impacts performance, which the next section explores through accuracy metrics and audit readiness.

Performance Comparison: Generic AI vs Domain-Specific AI

When it comes to compliance tasks, the performance gaps between generic AI and domain-specific solutions are hard to ignore. While generic AI models like ChatGPT, Claude, and Gemini are great for general tasks, they often fall short in areas requiring precision, detailed audit trails, and robust documentation. On the other hand, domain-specific platforms, such as ISMS Copilot, are specifically designed to tackle these challenges head-on.

Compliance Gap Detection Accuracy

Generic AI models base their predictions on broad datasets, which often leads to accuracy problems in compliance work. A 2024 Stanford study revealed that GPT-4 "hallucinated" - or generated incorrect information - 58% of the time when responding to verifiable legal questions [4]. These errors include:

- Inventing control numbers.

- Mixing up framework versions.

- Providing overly rigid or inaccurate mandates.

Domain-specific AI addresses these issues through a method called Framework Knowledge Injection, which ensures responses are grounded in verified regulatory text. For example, ISMS Copilot v2.5 uses this approach to virtually eliminate fabricated requirements across more than 50 regulatory frameworks [1][2].

| Accuracy Factor | Domain-Specific AI (e.g., ISMS Copilot) | Generic AI (e.g., ChatGPT, Claude) |
|---|---|---|
| Hallucination Rate | Nearly eliminated for framework queries [1][2] | 58–88% on domain-specific queries [4] |
| Framework Version | Clear distinctions (e.g., ISO 27001:2013 vs. 2022) [1] | Frequently mixes versions [4] |
| Control Accuracy | Based on verified regulatory text [1][2] | Prone to inventing or misquoting controls [4] |
| Institutional Memory | Retains organizational control environments over time [4] | Resets with each session, lacking persistence [4] |

These discrepancies highlight the importance of accuracy and proper documentation, which are essential for compliance and audit readiness.

Audit Records and Decision Documentation

Audit preparation is another area where domain-specific AI shines. As Humadroid puts it:

"Auditors don't evaluate whether your controls sound good. They evaluate whether your controls demonstrably operated across an entire audit period." [4]

Generic AI typically generates basic chat logs, which offer little value as audit evidence. In contrast, domain-specific platforms provide tamper-resistant audit logs and automated evidence collection through integrations with tools like AWS and GitHub. This not only strengthens audit readiness but also drastically cuts down preparation time and reduces audit findings [3][4].

Here’s the impact in numbers:

- Companies using automated compliance platforms report a 40–60% reduction in audit preparation time.

- They also see 50–70% fewer audit findings in their first external audit [4].

Additionally, manual compliance management often leads to costly errors, with 88% of spreadsheets containing mistakes and 90% of ISO 27001 failures stemming from poor documentation rather than missing technical controls [4].
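To make the idea of a tamper-resistant audit log more concrete, here is a minimal hash-chain sketch. It is one common technique, not a description of how any particular platform stores evidence; the field names and the `GENESIS` constant are assumptions. Each entry's hash covers the previous entry's hash, so editing or deleting an earlier record breaks verification from that point on.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, timestamp: str, event: str) -> str:
    """Hash the entry contents together with the previous entry's hash."""
    payload = json.dumps({"prev": prev_hash, "ts": timestamp, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list[dict], timestamp: str, event: str) -> None:
    """Append an entry that is chained to the current end of the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "prev": prev_hash,
        "ts": timestamp,
        "event": event,
        "hash": _entry_hash(prev_hash, timestamp, event),
    })


def verify(log: list[dict]) -> bool:
    """Recompute every hash and check that each entry links to the one before it."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(entry["prev"], entry["ts"], entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append_entry(log, "2025-01-10T09:00:00Z", "Access review completed")
append_entry(log, "2025-01-11T09:00:00Z", "Backup restore test passed")
print(verify(log))
```

A chain like this detects changes rather than preventing them, and someone who can rewrite the whole log can rebuild the chain. In practice the latest hash would also need to be anchored somewhere the log's owner cannot alter.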

Industry-Specific Applications

The advantages of domain-specific AI extend beyond accuracy and audit trails, especially in regulated industries. For instance:

- ISO 27001: Specialized platforms automate mapping for the 2022 control set, while generic AI often references outdated 2013 controls or even non-existent ones [4].

- GDPR: Domain-specific tools offer detailed gap analyses and EU data residency, whereas generic AI relies on US-based infrastructure and provides only surface-level advice [1][4].

- SOC 2: Platforms like ISMS Copilot enable continuous evidence collection via API integrations, proving control operation over time - something generic AI cannot achieve [4].

In healthcare, the stakes are even higher. Generic AI models, particularly free or lower-tier versions, pose a significant risk of leaking Protected Health Information (PHI) to training datasets [4]. Domain-specific tools mitigate this by embedding healthcare-specific knowledge and ensuring zero training on user data by default [1][4]. As ISACA warns:

"GRC teams must be careful about sharing proprietary company data with AI models." [4]

Cost Considerations

At first glance, generic AI might seem like the budget-friendly option. For example, ChatGPT Plus costs $20 per month. However, the lack of framework-specific accuracy and reliable audit trails often leads to higher costs in the long run due to audit failures, remediation efforts, and increased manual documentation. Comparatively, ISMS Copilot offers plans starting at $24/month, with Pro and Business tiers at $100/month and $250/month, respectively [1]. For organizations prioritizing compliance, the added cost of domain-specific AI can save significant time, effort, and resources.

Conclusion: Selecting the Right AI for Security Compliance

Main Points

When deciding between generic AI and domain-specific AI for compliance tasks, the key considerations are accuracy, data control, and audit readiness. While tools like ChatGPT and Claude perform well for general tasks, they fall short in compliance-specific applications. Their high hallucination rates can pose significant compliance risks and fail to provide the robust audit trails necessary for regulatory requirements [4].

In contrast, domain-specific tools like ISMS Copilot leverage Framework Knowledge Injection to deliver verified, regulation-focused answers [1][2]. These tools provide strict data residency guarantees (e.g., Frankfurt, Germany for GDPR compliance), default to zero training on user data, offer workspace isolation for multi-client scenarios, and provide tamper-proof audit trails with automated evidence collection. These features are critical for audit preparation, including the essential steps to ISO 27001 certification, with users reporting a 40–60% reduction in audit prep time and 50–70% fewer audit findings [4].

Such differences highlight the importance of selecting a tool tailored to meet stringent compliance needs.

How to Choose the Right Solution

To make an informed choice, consider the following steps:

- Assess Data Sovereignty Requirements: If your organization processes EU citizen data or operates in highly regulated sectors like healthcare or finance, confirm that the AI tool adheres to jurisdictional requirements for data processing and storage [1][7]. Generic AI systems often process data in the United States, even if storage occurs elsewhere [5].

- Evaluate Framework Accuracy: Ensure the tool can differentiate between versions of standards (e.g., ISO 27001:2013 vs. ISO 27001:2022) and avoid errors like fabricated control numbers [4][7]. Platforms using Retrieval-Augmented Generation with proper citations are more reliable [20].

- Prioritize Audit Evidence Capabilities: As Humadroid emphasizes:

  "Auditors don't evaluate whether your controls sound good. They evaluate whether your controls demonstrably operated across an entire audit period" [4].

  Look for tools that integrate seamlessly with your technical stack (e.g., AWS, GitHub, HRIS) to enable continuous monitoring and structured documentation instead of just generating conversational text.
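When evaluating framework accuracy, one quick spot check is to test whether the control numbers an AI cites can exist at all. The sketch below assumes the ISO/IEC 27001:2022 Annex A layout of 93 controls across four themes (5.1–5.37, 6.1–6.8, 7.1–7.14, 8.1–8.34). The function name is hypothetical, and the check only validates numbering, not whether the quoted control text is correct.

```python
import re

# Highest control number per Annex A theme in ISO/IEC 27001:2022
# (5 = organizational, 6 = people, 7 = physical, 8 = technological).
ANNEX_A_2022 = {5: 37, 6: 8, 7: 14, 8: 34}

# Accepts "A.5.1" or "5.1"; three-level IDs such as the 2013-style "A.12.4.1" do not match.
CONTROL_ID = re.compile(r"^(?:A\.)?(\d+)\.(\d+)$")


def is_valid_2022_control(control_id: str) -> bool:
    """Return True if the ID falls inside the 2022 Annex A numbering."""
    match = CONTROL_ID.match(control_id.strip())
    if not match:
        return False
    theme, number = int(match.group(1)), int(match.group(2))
    return 1 <= number <= ANNEX_A_2022.get(theme, 0)


for cited in ("A.5.1", "A.8.34", "A.8.35", "A.12.4.1"):
    print(cited, is_valid_2022_control(cited))
```

An ID that fails this check was either fabricated or taken from the 2013 edition, which is exactly the kind of error the evaluation step is meant to surface.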

For compliance-focused organizations, spending an additional $80 per month on a specialized tool (the gap between ChatGPT Plus at $20 and the ISMS Copilot Pro tier at $100) can lead to significant savings in audit remediation and staffing costs [1][4].

FAQs

::: faq

When is generic AI 'good enough' for compliance work?

Generic AI tends to perform adequately for compliance tasks that are low-risk, routine, and don’t demand specialized expertise or strict data privacy protocols. However, when it comes to tasks requiring domain-specific precision, it often falls short. Issues like inaccuracies or "hallucinations" can arise, making it unreliable for high-stakes compliance scenarios where accuracy and trust are non-negotiable. :::

::: faq

How can I verify an AI’s compliance answers won’t hallucinate?

When using AI for compliance-related tasks, it's crucial to verify its responses against official standards. This can help ensure the information provided is trustworthy and aligns with established guidelines.

Here are some strategies to improve accuracy:

- Request Citations: Always ask the AI to provide sources for its answers. This allows you to trace the information back to reliable references.

- Encourage Transparency: Ask the AI to acknowledge uncertainty if it’s unsure about an answer. This can help prevent overconfidence in potentially inaccurate responses.

- Simplify Complex Queries: Break down your questions into smaller, more manageable parts. This reduces the chance of misinterpretation or errors in the response.

Additionally, tools like ISMS Copilot can be valuable. This tool integrates verified knowledge to reduce inaccuracies, often referred to as hallucinations, and enhances the reliability of compliance-related outputs. :::

::: faq

What should I ask vendors about data residency and processing location?

When you're considering vendors for AI tools like ISMS Copilot, it's important to ask about where your data is stored and processed. Make sure their practices align with your region's regulations and your organization's privacy standards. If you're in a highly regulated industry, check whether the vendor offers data localization or data sovereignty options. Also, confirm their compliance with frameworks like GDPR or other relevant local laws. This way, you can ensure your data stays secure and meets all necessary legal requirements. :::

Related Posts

Unified Control Mapping Across Frameworks: Best Practices

Consolidate overlapping framework requirements into a single control library to cut audit time and centralize evidence.

AI-Powered Compliance Monitoring: How It Works

How ML, NLP, and data integration enable 24/7 compliance monitoring, evidence reuse, risk scoring, and automated remediation.

How Predictive Analytics Simplifies Multi-Framework Compliance

Use AI-driven predictive analytics to map overlapping controls, prioritize risks, and reuse evidence across ISO 27001, SOC 2, and NIS 2.