EU AI Act: Robustness Testing Requirements Explained

Explains Article 15 requirements for robustness testing of high-risk AI: tests, documentation, monitoring, and compliance timelines.

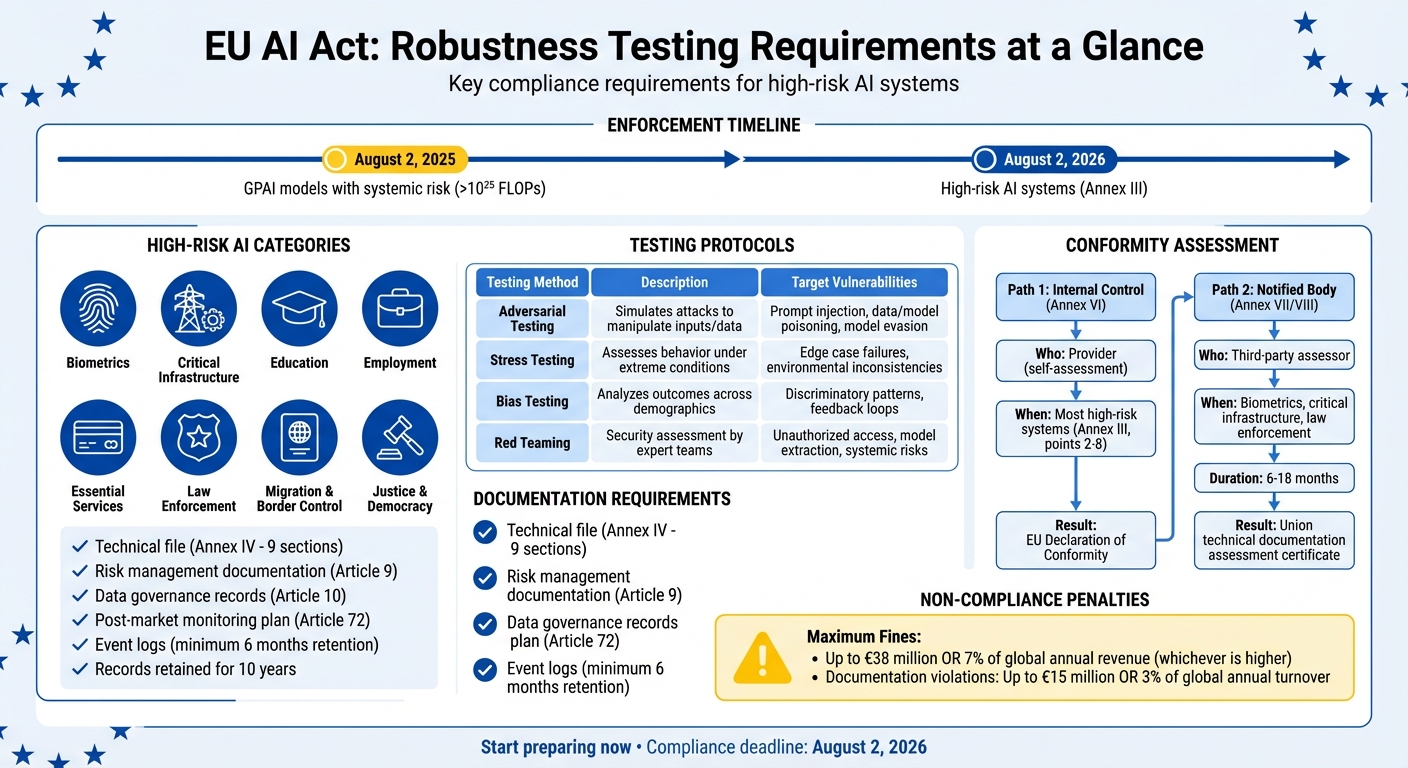

The EU AI Act mandates that high-risk AI systems undergo rigorous robustness testing to ensure reliable performance under errors, faults, or unexpected conditions. This requirement, detailed in Article 15, applies to systems in critical sectors like healthcare, transportation, and law enforcement. Here's what you need to know:

- Robustness Defined: The ability of AI systems to maintain consistent performance despite disruptions, as outlined in ISO/IEC 25059:2023.

- High-Risk AI Systems: Includes AI in biometrics, critical infrastructure, education, employment, and more, as listed in Annex III. Enforcement begins August 2, 2026.

- Testing Protocols: Includes adversarial testing, stress testing, and red teaming to identify vulnerabilities.

- Documentation: Providers must prepare detailed technical files, conduct conformity assessments, and retain records for 10 years.

- Post-Deployment Monitoring: Continuous performance tracking and safeguards against harmful feedback loops are required.

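Failing to follow the EU AI Act compliance checklist can result in fines of up to €35 million or 7% of global annual turnover for the most serious violations, and up to €15 million or 3% for breaches of high-risk system obligations. Start preparing now to meet these requirements before the enforcement deadline.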

::: @figure  {EU AI Act Robustness Testing Requirements and Compliance Timeline}

:::


High-Risk AI Systems and Applicability

What Qualifies as a High-Risk AI System?

The EU AI Act defines high-risk AI systems through two main approaches. First, it includes AI systems that act as safety components in products governed by EU harmonization laws (listed in Annex I). Examples include machinery, medical devices, and automotive systems where a failure could pose health or safety risks [7]. Second, it covers systems outlined in Annex III, spanning eight areas that could impact fundamental rights [7][8].

These eight Annex III categories are:

- Biometrics: Systems like remote identification and emotion recognition.

- Critical Infrastructure: Tools for managing road traffic or utilities (e.g., water, gas, electricity).

- Education: AI used in admissions decisions or exam monitoring.

- Employment: Applications such as resume screening, promotion decisions, and employee monitoring.

- Essential Services: Systems for credit scoring or insurance pricing.

- Law Enforcement: Tools like polygraphs or recidivism prediction models.

- Migration and Border Control: AI used in visa processing.

- Justice and Democracy: Systems developed to assist judicial authorities [7][8].

However, not all systems in these categories automatically qualify as high-risk. Article 6 allows for exemptions if a system performs narrowly defined tasks or supports human-led activities without significantly influencing decisions - provided profiling is not involved. To claim this exemption, organizations must document their assessment and register the system in the EU database before market entry [8]. Conversely, any AI system that profiles individuals within an Annex III domain remains classified as high-risk [8].
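The exemption logic described above can be sketched as a small decision function. This is only an illustration of the paragraph's reasoning, not a legal test: the function name, its three boolean inputs, and the returned labels are all hypothetical.

```python
def classify_annex_iii_system(in_annex_iii_area: bool,
                              profiles_individuals: bool,
                              narrow_or_supporting_task: bool) -> str:
    """Rough triage of an AI system against the Article 6 logic described above.

    All inputs are hypothetical yes/no answers from an internal assessment.
    """
    if not in_annex_iii_area:
        return "outside_annex_iii"
    # Profiling within an Annex III domain rules out the exemption.
    if profiles_individuals:
        return "high_risk"
    # Narrow or purely supporting tasks may qualify, but the assessment
    # still has to be documented and the system registered.
    if narrow_or_supporting_task:
        return "exemption_candidate"
    return "high_risk"


if __name__ == "__main__":
    print(classify_annex_iii_system(True, False, True))
```

A result of `exemption_candidate` only signals that the documented assessment is worth doing; it does not replace it.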

For all high-risk systems, Article 15 mandates robustness testing to ensure reliable performance throughout their lifecycle [4]. Enforcement of these requirements for Annex III systems begins on August 2, 2026 [8].

This classification framework underpins the stringent robustness standards required by the EU AI Act.

General-Purpose AI and Systemic Risk

The Act also addresses general-purpose AI (GPAI) models, which are not inherently high-risk. However, when these models are integrated into high-risk systems - such as using a large language model in recruitment or critical infrastructure - they must comply with high-risk standards [9][8]. Additionally, GPAI models identified as posing "systemic risk" are subject to specific robustness requirements, including adversarial testing [9].

A GPAI model is presumed to pose systemic risk when the cumulative compute used for its training exceeds 10^25 FLOPs [9]. Providers of these models must carry out detailed evaluations and adversarial testing to detect vulnerabilities [6]. The rules for GPAI models come into effect on August 2, 2025, a year earlier than the broader high-risk system requirements [9].
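As a rough illustration of the 10^25 FLOPs threshold, the sketch below estimates training compute with the widely used "6 x parameters x tokens" rule of thumb. That approximation and the example model sizes are assumptions for illustration; the Act states only the threshold, not how to estimate compute.

```python
SYSTEMIC_RISK_THRESHOLD_FLOPS = 1e25  # threshold cited in the text above


def estimate_training_flops(n_parameters: float, n_tokens: float) -> float:
    """Rule-of-thumb estimate (assumption): ~6 FLOPs per parameter per training token."""
    return 6.0 * n_parameters * n_tokens


def exceeds_systemic_risk_threshold(n_parameters: float, n_tokens: float) -> bool:
    """True when the estimated training compute is above the 10^25 FLOPs threshold."""
    return estimate_training_flops(n_parameters, n_tokens) > SYSTEMIC_RISK_THRESHOLD_FLOPS


if __name__ == "__main__":
    # Hypothetical model sizes, chosen only to show both outcomes.
    for name, params, tokens in [("small", 7e9, 2e12), ("large", 4e11, 1.5e13)]:
        flops = estimate_training_flops(params, tokens)
        print(f"{name}: {flops:.2e} FLOPs, above threshold: "
              f"{exceeds_systemic_risk_threshold(params, tokens)}")
```

An estimate like this is useful for early planning only; a formal determination would rest on measured training compute.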

Technical and Organizational Measures for Robustness

Building Technical Resilience

Article 15 of the EU AI Act emphasizes the importance of resilience in high-risk AI systems, ensuring they can withstand operational disruptions. These systems must handle errors, faults, or inconsistencies within their environment, even when faced with hardware failures, corrupted inputs, or unexpected user behavior [1].

One key approach to achieving resilience is technical redundancy. For instance, containerization can isolate system operations, maintaining reliability even when infrastructure issues arise [2]. In critical settings, designing for graceful degradation - where the system transitions to manual operations or backup systems instead of shutting down entirely - adds another layer of reliability.
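Graceful degradation can be sketched as a fallback chain: try the primary model, then a backup, and hand the case to a human if neither produces a usable result. The names below (`Decision`, `predict_with_fallback`) and the assumption that a valid score lies in [0, 1] are hypothetical choices for this example.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Decision:
    value: Optional[float]  # None means no automated result is available
    source: str             # "primary", "backup", or "manual_review"


def predict_with_fallback(features: dict,
                          primary: Callable[[dict], float],
                          backup: Callable[[dict], float]) -> Decision:
    """Try each model in order; route to manual review instead of failing outright."""
    for name, model in (("primary", primary), ("backup", backup)):
        try:
            score = model(features)
        except Exception:
            continue  # a production system would also log this fault
        # Sanity check on the output; NaN fails the range comparison.
        if isinstance(score, (int, float)) and 0.0 <= score <= 1.0:
            return Decision(float(score), name)
    return Decision(None, "manual_review")


if __name__ == "__main__":
    def unavailable(_features: dict) -> float:
        raise RuntimeError("primary model unavailable")

    print(predict_with_fallback({"x": 1}, unavailable, lambda f: 0.42))
```

The point of the design is that an infrastructure fault changes who makes the decision rather than stopping the service.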

AI-specific vulnerabilities also demand attention. Threats like data poisoning (tampering with training data), model poisoning (tampering with pre-trained components used in training), and adversarial examples (inputs designed to mislead the AI) require strong defenses [2]. The MITRE ATLAS framework provides tools to test and mitigate these risks [2]. In addition, automated monitoring and alerting systems can identify performance issues or availability problems in real time. Specialized tools like ISMS Copilot One can help automate these complex compliance workflows.

For AI systems that continue learning post-deployment, feedback loops pose unique challenges. If biased outputs feed into subsequent training data, those biases can compound. To address this, measures like selective data curation and limiting the integration of real-world feedback are essential [1]. Event logging, as required under Article 12, helps track performance changes and detect potential issues [8].
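One way to picture selective data curation is a filter that admits only human-verified feedback into the next training set and caps how much feedback is added relative to the original data. The record layout, the `human_verified` flag, and the 0.25 cap are assumptions made for this sketch.

```python
def curate_feedback(original: list, feedback: list,
                    max_feedback_ratio: float = 0.25) -> list:
    """Merge feedback into training data under two safeguards.

    1. Only records flagged as human-verified are eligible.
    2. At most `max_feedback_ratio` feedback records are added per original record.
    """
    verified = [record for record in feedback if record.get("human_verified")]
    limit = int(max_feedback_ratio * len(original))
    return original + verified[:limit]


if __name__ == "__main__":
    original = [{"id": i} for i in range(8)]
    feedback = [{"id": 100 + i, "human_verified": i != 0} for i in range(5)]
    merged = curate_feedback(original, feedback)
    print(len(merged), "records in the curated training set")
```

Capping the share of feedback-derived records limits how quickly a biased output pattern can reinforce itself through retraining.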

These technical safeguards work in tandem with organizational measures to ensure systems remain fair and unbiased.

Addressing Bias and Fairness

Mitigating bias is not just an ethical responsibility but also a legal obligation under the Act. High-risk AI systems must operate fairly across all demographic groups, particularly in sensitive areas like employment, education, or access to essential services [8].

Regular fairness audits are crucial. For example, if a resume screening tool fails to account for diverse career paths or educational backgrounds, it could unfairly disadvantage certain groups. Under the Act's penalty regime, non-compliance with high-risk obligations can draw fines of up to €15 million or 3% of global annual turnover, while the top tier of €35 million or 7% applies to prohibited practices [10].
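A basic fairness audit can start by comparing selection rates across groups. The sketch below computes each group's rate and the ratio between the lowest and highest. The 0.8 review threshold mentioned afterwards is borrowed from the "four-fifths" rule of thumb used in US employment practice; it is an assumption here, not a figure from the EU AI Act, and the sample data is invented.

```python
from collections import defaultdict


def selection_rates(records):
    """records: iterable of (group, was_selected) pairs -> {group: selection rate}."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in records:
        totals[group] += 1
        selected[group] += int(bool(was_selected))
    return {group: selected[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


if __name__ == "__main__":
    # Invented screening outcomes for two hypothetical groups.
    sample = ([("A", True)] * 5 + [("A", False)] * 5
              + [("B", True)] * 2 + [("B", False)] * 8)
    rates = selection_rates(sample)
    print(rates, "ratio:", round(disparity_ratio(rates), 2))
```

A ratio well below a chosen threshold such as 0.8 would be a prompt for deeper review, not proof of discrimination on its own.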

Human oversight, as mandated by Article 14, is another critical component. Humans must understand the system's limitations, avoid blindly trusting its outputs (automation bias), and intervene when needed [8]. One effective approach is an advisory-only design, where AI outputs are reviewed by humans before any action is taken.

To address bias systematically, establish a Quality Management System (QMS) under Article 17. This should include written policies for identifying and correcting bias, along with ongoing risk management throughout the AI system's lifecycle. Post-market monitoring is essential for evaluating real-world performance, while automated logs - retained for at least six months - create a data trail for audits and incident investigations [8].

Testing and Validation for Robustness

Robustness Testing Protocols

To ensure AI systems are prepared for deployment, the EU AI Act mandates rigorous pre-deployment testing, especially for high-risk systems. These tests are designed to confirm resilience against errors, faults, and potential attacks [1].

Adversarial testing is a key requirement for general-purpose AI models with systemic risk, as well as for high-risk systems. This approach involves simulating attacks like prompt injection, data poisoning, and model evasion to evaluate how well the system can withstand such threats [10][11]. Another critical method is red teaming, where internal or external experts actively attempt to breach the system, providing a comprehensive security evaluation [10].

Stress testing evaluates how systems handle extreme or unusual scenarios, such as edge cases or intentional fault injections, ensuring that failures occur in a controlled and predictable manner [10][11].

| Testing Method | Description | Target Vulnerabilities |
|---|---|---|
| Adversarial Testing | Simulates attacks to manipulate inputs or training data | Prompt injection, data/model poisoning, model evasion [10][3] |
| Stress Testing | Assesses behavior under extreme or unusual conditions | Edge case failures, environmental inconsistencies [11][8] |
| Bias Testing | Analyzes outcomes across demographic groups | Discriminatory patterns, feedback loops [11] |
| Red Teaming | Security assessment by internal or external teams | Unauthorized access, model extraction, systemic risks [10] |

To minimize bias in outputs, testing datasets must be carefully curated to be relevant, representative, and as error-free as possible [1][11]. Additionally, providers are required to declare specific accuracy and resilience benchmarks in their user instructions, aligning with EU Commission standards [1][3].
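A stress test of the kind described above can be sketched as noise injection: perturb the inputs at increasing noise levels and record how accuracy changes against the clean baseline. The toy threshold model, the Gaussian noise, and the chosen noise levels are all stand-ins for illustration, not a prescribed protocol.

```python
import random


def toy_model(x: float) -> int:
    """Hypothetical stand-in for the system under test."""
    return 1 if x >= 0.5 else 0


def accuracy(model, inputs, labels) -> float:
    """Share of inputs for which the model output matches the label."""
    return sum(model(x) == y for x, y in zip(inputs, labels)) / len(labels)


def stress_test(model, inputs, labels, noise_levels, seed: int = 0) -> dict:
    """Return {noise level: accuracy} after adding Gaussian noise to every input."""
    rng = random.Random(seed)  # fixed seed keeps the test reproducible
    results = {}
    for sigma in noise_levels:
        noisy = [x + rng.gauss(0.0, sigma) for x in inputs]
        results[sigma] = accuracy(model, noisy, labels)
    return results


if __name__ == "__main__":
    inputs = [i / 100 for i in range(100)]
    labels = [toy_model(x) for x in inputs]
    for sigma, acc in stress_test(toy_model, inputs, labels, [0.0, 0.1, 0.3]).items():
        print(f"noise sigma={sigma}: accuracy={acc:.2f}")
```

Comparing each result with the accuracy level declared in the instructions for use shows at which disturbance level the system stops meeting its own benchmark.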

"Quality assurance is no longer optional - it's the foundation of regulatory compliance. Organizations need to prove their AI systems are safe, fair, and reliable before they can enter the EU market."

Once these pre-deployment protocols are completed, ongoing monitoring ensures that the systems remain robust after deployment.

Continuous Monitoring After Deployment

Testing doesn’t end when an AI system is deployed. According to Article 72, providers must implement post-market monitoring systems that continuously gather, document, and analyze performance data throughout the system's lifecycle [4][12]. This ensures the system adapts to evolving real-world conditions while maintaining its integrity.

Logs generated by high-risk AI systems must be retained for at least six months. These logs help track performance, identify potential risks, and document significant changes [6][8][12]. Tools like anomaly detection can flag unexpected data shifts or noise that might undermine the system's robustness [2][5].
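A very simple form of the data-shift detection mentioned above compares the mean of a live feature against a reference sample, measured in reference standard deviations. The scoring method and the 0.5 alert threshold are assumptions for this sketch; production monitoring would normally use richer statistics.

```python
from statistics import mean, stdev


def mean_shift_score(reference, live) -> float:
    """Absolute shift of the live mean, in units of the reference standard deviation."""
    ref_std = stdev(reference)
    shift = abs(mean(live) - mean(reference))
    if ref_std == 0:
        return 0.0 if shift == 0 else float("inf")
    return shift / ref_std


def drift_alert(reference, live, threshold: float = 0.5) -> bool:
    """Flag the feature for review when the shift score exceeds the threshold."""
    return mean_shift_score(reference, live) > threshold


if __name__ == "__main__":
    reference = [1.0, 2.0, 3.0, 4.0, 5.0]  # invented training-time values
    print(drift_alert(reference, [1.1, 2.2, 2.9, 4.1, 4.8]))
    print(drift_alert(reference, [6.0, 7.0, 8.0, 9.0, 10.0]))
```

Alerts like this are most useful when they are written to the same event log that supports audits and incident investigations.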

For systems that learn continuously, safeguards must be in place to prevent feedback loops, where biased outputs could influence future training data [4][2][1]. Monitoring tools track performance deterioration over time, while containerization ensures stable and reproducible environments for system execution [2].

Both providers and deployers are obligated to report incidents [6][12]. Additionally, human oversight mechanisms, such as "stop button" procedures, allow for immediate intervention if problems arise [8].

"The EU AI Act requires continuous monitoring after deployment, not just one-time testing. High-risk AI systems need automated logging and incident response built in from the start."

- Oleg Sivograkov, VP of IT Operations, TestFort [11]

The enforcement date for these requirements is set for August 2, 2026 [8]. Failing to comply could result in severe penalties: fines under the Act reach up to €35 million or 7% of global annual turnover at the top tier [10].

Part 2: Navigating the EU AI Act: Compliance Essentials for High-Risk AI Systems

::: @iframe https://www.youtube.com/embed/wsr6d6WG5Uo :::

Documentation and Compliance Reporting

After rigorous testing and ongoing monitoring, proper documentation is essential to ensure compliance is maintained.

Technical Documentation Standards

Before introducing a product to the market, you must prepare a technical file that meets Annex IV standards. This file should clearly demonstrate adherence to Article 15's robustness requirements [13]. The file must include nine sections, with Section 4 focusing specifically on performance metrics. It should detail robustness test results, validation methods, and the reasoning behind chosen metrics [13].

Creating this documentation can take 40-80 hours, provided compliance data is already captured as part of the development process [13]. Tools like MLflow can streamline this by capturing logs, audit trails, and design decisions directly within your workflow.

Your technical file should also include the following:

- Risk management documentation (Article 9)

- Data governance records (Article 10) explaining how datasets were used to assess bias

- A post-market monitoring plan (Article 72)

- Instructions outlining robustness levels and limitations [1]
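The list above can be turned into a simple completeness check that runs alongside the build. The manifest keys and file paths below are hypothetical; only the four required items come from the list itself.

```python
# Required items taken from the list above; the dictionary keys are hypothetical.
REQUIRED_ITEMS = {
    "risk_management": "Risk management documentation (Article 9)",
    "data_governance": "Data governance records (Article 10)",
    "post_market_monitoring": "Post-market monitoring plan (Article 72)",
    "instructions_for_use": "Instructions outlining robustness levels and limitations",
}


def missing_items(manifest: dict) -> list:
    """Return the labels of required items with no document path in the manifest."""
    return [label for key, label in REQUIRED_ITEMS.items() if not manifest.get(key)]


if __name__ == "__main__":
    # Hypothetical manifest mapping each item to a file in the technical file.
    manifest = {
        "risk_management": "docs/risk_management.pdf",
        "data_governance": "docs/data_governance.md",
        "post_market_monitoring": "",
    }
    for label in missing_items(manifest):
        print("Missing:", label)
```

A check like this only confirms that a document exists; whether its content satisfies the Act still needs human review.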

All technical documentation must be retained for 10 years after the product enters the market. Non-compliance can result in penalties of up to €15 million or 3% of global annual turnover [13].
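To make the penalty figure concrete, the sketch below computes the ceiling for a given turnover, reading "€15 million or 3%" as whichever amount is higher, which is how the Act's penalty article frames it for most undertakings (for SMEs the lower amount applies). The function name and the example turnovers are illustrative.

```python
FIXED_CAP_EUR = 15_000_000  # fixed amount cited above
TURNOVER_PERCENT = 3        # percentage of global annual turnover cited above


def fine_ceiling_eur(global_annual_turnover_eur: int, is_sme: bool = False) -> int:
    """Upper bound of the fine: the higher of the two amounts, or the lower for SMEs."""
    percentage_amount = global_annual_turnover_eur * TURNOVER_PERCENT // 100
    pick = min if is_sme else max
    return pick(FIXED_CAP_EUR, percentage_amount)


if __name__ == "__main__":
    for turnover in (100_000_000, 1_000_000_000):  # invented turnovers
        print(f"turnover {turnover:,} EUR -> ceiling {fine_ceiling_eur(turnover):,} EUR")
```

These are maximums; the actual amount depends on the circumstances of the infringement.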

Conformity Assessments and CE Marking

Once the technical file is complete, the next step is proving compliance through a conformity assessment. Most high-risk AI systems can be self-assessed under the Internal Control process outlined in Annex VI [15]; under Article 43, this covers the systems listed in Annex III, points 2-8. Biometric systems (Annex III, point 1) require an external evaluation by a notified body when the provider has not fully applied harmonised standards or common specifications, and AI safety components in Annex I products follow the third-party procedure of the relevant product legislation. Notified bodies are third-party assessors authorized to review your documentation and verify compliance [14].

Notified bodies may request additional evidence or conduct independent testing if they find your robustness tests insufficient [14]. They will verify that measures like redundancy, backup plans, and fail-safes are documented and implemented [1]. For AI systems that continue learning post-deployment, they will also check safeguards against feedback loops that could introduce bias into future training data [1].

| Assessment Type | Who Conducts It | When Required | Certificate Issued |
|---|---|---|---|
| Internal Control (Annex VI) | Provider (self-assessment) | Most high-risk systems (Annex III, points 2-8) | EU Declaration of Conformity |
| Notified Body (Annex VII) | Third-party assessor | Biometrics (Annex III, point 1) where harmonised standards are not fully applied | Union technical documentation assessment certificate |

Given that assessments by notified bodies can take 6–18 months, providers of safety-critical or biometric systems should initiate the process well before the August 2, 2026 deadline [16]. Be ready to provide access to training, validation, and testing datasets via API or other technical means if requested [14].

After successfully completing the assessment, you must issue an EU Declaration of Conformity and apply the CE marking to your system or its documentation [15]. This marking serves as legal proof that your system meets robustness standards. If any significant changes are made - such as modifications that affect compliance or the system's intended purpose - you’ll need to update the documentation and possibly undergo a new conformity assessment [13].

"The technical documentation shall be drawn up in such a way as to demonstrate that the high-risk AI system complies with the requirements [...] and to provide national competent authorities and notified bodies with the necessary information in a clear and comprehensive form to assess the compliance."

- Article 11, Regulation (EU) 2024/1689 [13]

Conformity assessments, paired with continuous monitoring, form a complete compliance framework, ensuring high-risk AI systems meet robustness standards throughout their lifecycle.

Robustness vs. Cybersecurity: Key Differences

Let’s break down how the EU AI Act differentiates robustness from cybersecurity - two concepts that might seem similar but serve very different purposes.

The distinction lies in intent. Robustness, as outlined in Article 15(4) [1], deals with unintentional disruptions. These could include natural errors, faults, or inconsistencies that arise during regular system operation. Cybersecurity, covered in Article 15(5) [3], focuses on intentional, malicious attacks, where unauthorized third parties actively try to manipulate or compromise system performance. While earlier sections covered how to test for robustness, this part highlights the divide between internal resilience and external threats.

In technical terms, robustness in machine learning refers to non-adversarial resilience. This involves testing an AI system’s ability to handle challenges like distribution shifts, environmental changes, or feedback loops - where model outputs influence future training data. Cybersecurity, however, focuses on adversarial resilience, protecting against threats like data poisoning, evasion attacks, or even breaches that compromise model confidentiality, such as theft.

Henrik Nolte from the University of Tübingen explains this divide clearly:

"The AIA artificially splits the CSA's concept of cybersecurity by designating unintentional causes as a matter of robustness and restricting cybersecurity to intentional actions."

For compliance, robustness and cybersecurity call for different strategies. Robustness relies on measures like technical redundancy and continuous monitoring to ensure systems can handle unintentional disruptions. Cybersecurity, meanwhile, demands active defenses like threat detection, encryption, and adversarial training to counter deliberate attacks. Maintaining both throughout an AI system's lifecycle involves evaluating every component individually to ensure comprehensive protection.

Conclusion and Key Takeaways

Let’s wrap up by highlighting the essential steps for ensuring your AI systems are reliable and compliant with upcoming regulations.

Robustness testing is a cornerstone for ensuring that high-risk AI systems perform consistently under practical conditions. With the enforcement of Annex III requirements set for August 2, 2026 [8], organizations must act now to establish performance benchmarks, integrate redundancy mechanisms, and implement ongoing monitoring throughout the AI lifecycle.

The concept of robustness is highly dependent on context. You’ll need to define performance metrics that align with your system’s specific purpose and anticipate disruptions such as data drift, environmental changes, or feedback loops. Testing protocols should be tailored to the unique use case, data properties, and deployment environment of each AI system.

Start by setting clear performance baselines under normal conditions. Then, methodically test how your system responds to various challenges or disruptions. Incorporate redundancy measures - such as backup systems and fail-safes - and ensure human oversight mechanisms are in place to intervene when anomalies occur. Additionally, your logging systems should support compliance by providing tamper-proof, append-only audit trails.
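An append-only audit trail can be approximated with hash chaining, where each entry stores the hash of the previous one so that any later modification is detectable. Strictly speaking this makes the log tamper-evident rather than tamper-proof. The `AuditLog` class and the event fields are hypothetical names for this sketch.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry


class AuditLog:
    """In-memory, append-only log in which every entry is chained to the previous one."""

    def __init__(self):
        self._entries = []

    def append(self, event: dict) -> str:
        """Serialize the event, chain it to the previous entry, and return its hash."""
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        payload = json.dumps(event, sort_keys=True)
        digest = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
        self._entries.append({"event": payload, "prev_hash": prev_hash, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute the chain; False if any entry was altered, removed, or reordered."""
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            expected = hashlib.sha256(
                (prev_hash + entry["event"]).encode("utf-8")).hexdigest()
            if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


if __name__ == "__main__":
    log = AuditLog()
    log.append({"type": "prediction", "model_version": "1.0", "outcome": "flagged"})
    log.append({"type": "human_override", "reviewer": "analyst-1"})
    print("chain intact:", log.verify())
```

In practice the entries would be written to durable storage and the latest hash anchored somewhere the application cannot rewrite.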

Providers are primarily responsible for preparing technical documentation (Article 11) and conducting conformity assessments (Article 43), while deployers must manage human oversight and maintain robust log retention practices (Article 26). Both roles share the responsibility of maintaining system robustness throughout the AI system's lifecycle.

Streamlining Compliance with ISMS Copilot

Advanced compliance tools like ISMS Copilot can simplify these critical steps.

ISMS Copilot is designed to assist with EU AI Act compliance by analyzing your existing technical documentation and impact assessments to pinpoint gaps in robustness requirements. The platform helps draft the detailed Article 11 technical documentation that regulators will review, covering everything from system descriptions to monitoring protocols.

For regulatory tasks related to the EU AI Act, the platform leverages Mistral models, which are specifically trained on European regulations. These models provide detailed insights into the Act’s requirements. You can create individual workspaces for each AI system, ensuring clear audit trails and organized compliance efforts. When using the platform, include details like your system’s use case, data types, and risk classification to receive tailored guidance that aligns with your compliance needs.

One standout feature of ISMS Copilot is its gap analysis tool, which is especially helpful for robustness testing. By uploading your current risk assessments and testing protocols, the platform identifies missing components and offers a prioritized remediation plan. This helps you allocate resources efficiently and address critical compliance gaps ahead of the August 2026 deadline.

FAQs

::: faq

How do I know if my AI system is “high-risk” under the EU AI Act?

Under the EU AI Act, your AI system is considered “high-risk” if it meets one of two criteria:

- It functions as a safety component of a product regulated by EU laws listed in Annex I.

- It is applied in high-risk scenarios identified in Annex III, such as credit assessments, recruitment processes, or biometric identification.

These classifications play a key role in defining the compliance obligations for your system. :::

::: faq

What robustness tests should I run before putting a high-risk AI system on the EU market?

Before rolling out a high-risk AI system in the EU, run robustness tests that cover the methods described above: adversarial testing, stress testing, bias testing, and red teaming. Together they check the accuracy, resilience, and cybersecurity properties that Article 15 requires. These evaluations aren't just about compliance - they're crucial for building a system that performs reliably under various conditions. :::

::: faq

What evidence do regulators expect in the Article 11 technical file for robustness?

Regulators expect the Article 11 technical file to follow Annex IV, which sets out nine required sections covering the AI system's design, development, and lifecycle. For robustness, the key evidence sits in Section 4: robustness test results, validation methods, and the reasoning behind the chosen performance metrics. The file must be completed before the system is placed on the market and kept up to date throughout its lifecycle. :::
